I'll be direct: responsible AI isn't a values statement for us. It's an architectural requirement. The difference matters more than most organizations realize.

When generative AI took off in 2023, the pressure to move fast was real — and we moved. But as adoption scaled, a harder truth emerged. The "move fast and break things" playbook doesn't survive contact with enterprise-grade work. When AI is embedded in how 85% of the Fortune 500 manages its most critical programs, the cost of getting it wrong isn't just a bad user experience — it's eroded trust, compliance exposure, and AI investments that stall before they can scale.

According to IBM's 2025 Cost of a Data Breach Report, 63% of organizations still lack AI governance policies. That number tells you something important: most companies are still treating governance as something they'll add later. We made a different bet: that governance built into the architecture from day one is what makes AI faster and more trustworthy, not slower.

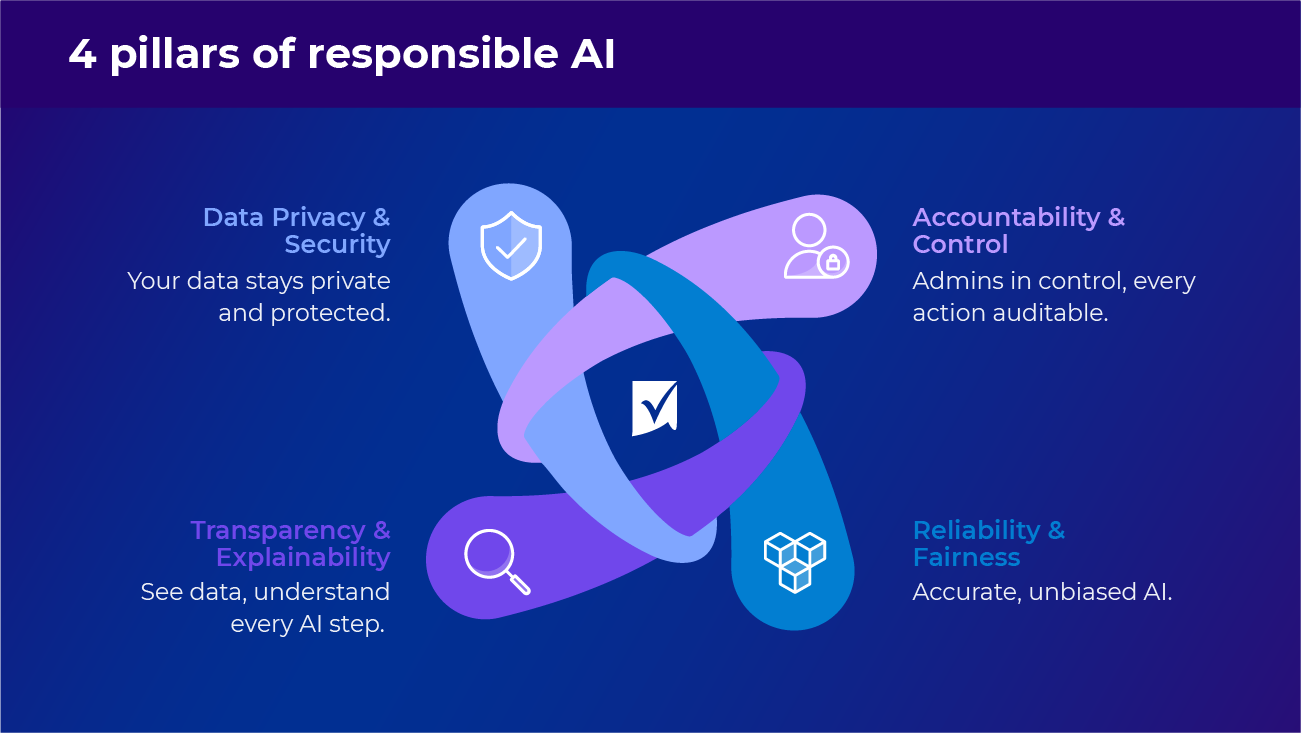

Our approach is organized around four pillars. Together, they define the standards we apply to every AI capability we build and the expectations we set for every partner we work with.

Our non-negotiable commitments for Smartsheet AI

1. Data privacy and security: your data stays yours

This is the first principle for a reason. Your data never mixes with other customers' data. It never trains third-party foundation models. It never leaves your control. Full stop.

In practice: all AI features adhere to the same compliance standards that govern the broader Smartsheet platform — such as SOC 2, GDPR, and data residency requirements. Data is encrypted in transit and at rest, content filtering safeguards are applied, and AI operates within your existing permission structures. If a user doesn't have access to a sheet, the AI doesn't either.
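To make the permission rule concrete, here is a minimal sketch of permission-scoped context. It is an illustration only, not Smartsheet's implementation: the `Sheet` type, the `readers` set, and `sheets_visible_to_ai` are hypothetical names, and a real access model would be richer than a flat list of readers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sheet:
    sheet_id: str
    # Users allowed to read this sheet (hypothetical, simplified ACL).
    readers: frozenset


def sheets_visible_to_ai(user: str, sheets: list) -> list:
    """Return only the sheets the requesting user can already read.

    The AI receives this filtered list as its context, so it never sees
    a sheet the user could not open directly.
    """
    return [s for s in sheets if user in s.readers]


sheets = [
    Sheet("budget", frozenset({"ana", "raj"})),
    Sheet("hiring", frozenset({"raj"})),
]
visible = [s.sheet_id for s in sheets_visible_to_ai("ana", sheets)]
```

The point of the design is that filtering happens before the model is called, so access is inherited from the user rather than granted to the AI separately.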

We made a deliberate architectural decision not to use customer content to train third-party foundation models, even in aggregate. That's a harder line than a lot of vendors draw, and it's one we're not moving off.

2. Accountability & control: no black boxes

Enterprise AI that can't be explained, audited, or controlled is a liability, not an asset. Every AI action in Smartsheet will be fully auditable — by end users who can review, evaluate, and approve AI-generated changes, and by system administrators with centralized visibility across the platform.

System admins will have full control over which AI features are enabled and for whom, and Workspace admins will have granular controls over which data AI can access within their workspaces. This is enforced at the architecture layer, not just the UI.
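One way to picture "which AI features are enabled and for whom" is a deny-by-default policy lookup. The sketch below is an assumption for illustration: the `AI_POLICY` structure, the group names, and `feature_enabled` are hypothetical and do not describe the actual admin controls.

```python
# Hypothetical policy: feature name -> groups allowed to use it.
AI_POLICY = {
    "smart_assist": {"pmo", "finance"},
    "smart_agents": set(),  # enabled for no one
}


def feature_enabled(feature: str, user_groups: set) -> bool:
    """Deny by default: unknown features and empty allow-lists are off."""
    allowed = AI_POLICY.get(feature, set())
    return bool(allowed & user_groups)
```

Checking the policy on the server for every request, rather than hiding a button, is what enforcing this below the UI would mean in practice.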

I think of this as the 'expert in the middle' principle. AI analyzes and recommends. Humans decide. Especially on anything that carries real organizational consequences.

That pattern — AI as a recommendation engine with human judgment at the decision point — is how we've designed every agent and AI feature we're building. It's not a limitation of the technology. It's a deliberate choice about how enterprise AI should actually work.
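The "AI recommends, humans decide" pattern can be sketched as a change that cannot be applied until a person approves it. This is a simplified illustration under stated assumptions: `ProposedChange`, `approve`, and `apply_change` are hypothetical names, and a real system would also verify who the reviewer is.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProposedChange:
    description: str
    approved_by: Optional[str] = None  # None until a human signs off


def approve(change: ProposedChange, reviewer: str) -> None:
    """Record the human reviewer who accepted the AI's proposal."""
    change.approved_by = reviewer


def apply_change(change: ProposedChange, sheet: list) -> None:
    """Apply a change only if a human has approved it."""
    if change.approved_by is None:
        raise PermissionError("AI-proposed change requires human approval")
    sheet.append(change.description)


sheet_log = []
change = ProposedChange("Move launch date to June 3")

try:
    apply_change(change, sheet_log)  # rejected: nobody has approved yet
    blocked = False
except PermissionError:
    blocked = True

approve(change, "ana")
apply_change(change, sheet_log)  # succeeds after approval
```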

3. Reliability & fairness: consistent, trustworthy outcomes at scale

Pew Research found that 55% of both AI experts and the public say they are highly concerned about bias in AI decision-making — and they're right to. Bias in AI systems is rarely obvious, and it compounds over time. We've built bias detection and ongoing model evaluation into our development process as a continuous requirement, not a pre-launch checklist item.

We continuously monitor AI response quality through automated monitoring, model testing, and direct user feedback. Our targets: sub-3% hallucination rate, above 80% task completion, sub-3-second response times. These thresholds gate what we ship. If a capability doesn't meet them, it doesn't go out.
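As a rough sketch, the three thresholds can be read as a single pass/fail gate. The numbers come from the targets above; the `EvalResult` type, the field names, and the choice of strict inequalities are assumptions made for illustration, and how each metric is measured is outside this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    hallucination_rate: float  # fraction of responses, 0.0 to 1.0
    task_completion: float     # fraction of tasks completed, 0.0 to 1.0
    response_seconds: float    # measured response time in seconds


def passes_release_gate(r: EvalResult) -> bool:
    """True only if all three targets are met at once."""
    return (
        r.hallucination_rate < 0.03   # sub-3% hallucination rate
        and r.task_completion > 0.80  # above 80% task completion
        and r.response_seconds < 3.0  # sub-3-second responses
    )
```

A capability that is fast and accurate but completes only 80% of tasks would still fail, because every condition has to hold.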

4. Transparency & explainability: you should always know what the AI is doing and why

When you're interacting with AI in Smartsheet, you'll know it. When data has been generated or transformed by AI, it's identified as such. This isn't just a disclosure requirement — it's foundational to trust.

More importantly, when AI produces a recommendation, you can understand the reasoning: what data it used, what patterns it detected, and how it got there. For regulated industries, the ability to show your work to regulators, auditors, and CISOs is increasingly non-negotiable. We're building with that bar in mind.

What this looks like in the Smartsheet platform

Principles are easy to publish. What's more useful is what they actually translate to in product decisions. Here's how our four commitments show up in what we're building:

- Human-in-the-loop, always. Every new AI capability ships with checkpoints and approval steps built in. You decide where and when to apply AI. The AI doesn't run ahead of you on anything consequential.

- Clear identification of AI-generated content. Explicit indicators across the platform for every AI feature and its output — in dashboards, sheets, and Smart Assist interactions. No ambiguity about when you're looking at AI-generated content.

- Full auditability and traceability. For Smart Assist and Smart Agents, a complete audit trail — what happened, when it happened, and how it was initiated. Admins get centralized records across the platform; end users can review what AI performed within their work. This is the compliance story that CISOs and legal teams need.

- Centralized control over AI. System admins set the boundaries for their organization's instance — which features are enabled, for whom, and what data AI can touch. This is the first thing we design, not the last.

- Usage monitoring and analytics. Beyond audit logs, admins get full usage analytics to track how teams are using AI and measure business impact across workflows. Over time, this becomes a key part of demonstrating AI ROI to leadership.
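The audit-trail commitment in the list above (what happened, when it happened, and how it was initiated) maps naturally onto a small, immutable record. The sketch below is hypothetical: `AuditEvent`, its field names, and `record` are illustrative and do not describe the actual audit log format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    action: str         # what happened
    occurred_at: str    # when it happened (ISO 8601, UTC)
    initiated_by: str   # how it was initiated, e.g. a user or a schedule
    ai_generated: bool  # marks output produced by AI


def record(log: list, action: str, initiated_by: str) -> AuditEvent:
    """Append an immutable event to the log and return it."""
    event = AuditEvent(
        action=action,
        occurred_at=datetime.now(timezone.utc).isoformat(),
        initiated_by=initiated_by,
        ai_generated=True,
    )
    log.append(event)
    return event


audit_log = []
event = record(audit_log, "summarized sheet 'budget'", "user:ana")
```

Making the record frozen means an entry cannot be edited after the fact, which is the property auditors usually care about most.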

Choosing partners who share these values

Your responsible AI posture is only as strong as the least accountable player in your stack. That's why partner selection isn't a procurement decision for us — it's a strategic one.

We chose AWS and Amazon Bedrock in part because their responsible AI commitments align with ours. AWS addresses responsible AI across ten dimensions: controllability, privacy, security, safety, fairness, veracity, robustness, explainability, transparency, and governance. That's a framework we can build on, not around.

Concretely, Bedrock extends our commitments with:

- Customer data never stored or shared; API calls stay within the region where they are made

- Data encrypted in transit and at rest

- 20 compliance standards met, including SOC, ISO, PCI, and GDPR

- Bedrock Guardrails for sensitive information protection and topic filtering

- Bedrock Evaluations checking for robustness, accuracy, bias, and hallucination

We apply the same bar to every AI partner in our stack. Building responsible AI isn't a Smartsheet-only effort — it requires the whole ecosystem to hold the line.

The bottom line: governance is what makes fast AI sustainable

Here's the counterintuitive thing I've come to believe: organizations that treat AI governance as an unwelcome constraint end up building slower. If AI can't be explained, audited, or controlled, it's not a capability. It's a liability that will eventually force you to slow down or pause deployment altogether.

The enterprises winning with AI aren't skipping governance. They're the ones who embedded it early enough that it never slowed them down.

At Smartsheet, responsible AI by design means we're building on a foundation that scales: one that supports Fortune 500 security requirements, hundreds of thousands of users, and the inevitable AI regulatory changes coming in 2026 and beyond. Aligning with frameworks like the EU AI Act and ISO/IEC 42001 is about more than checking a box. It signals to enterprise buyers that we're prepared and have thought through what's coming.

The potential of AI in intelligent work management is massive. But that potential is only realized if enterprises can deploy AI they actually trust. Security, privacy, governance, and transparency aren't things we layer on top — they're the foundation. Everything we build starts there.

Learn more about Smartsheet AI

Drew Garner leads AI & Platform Strategy at Smartsheet, overseeing the Applied AI organization and the company's transformation into an intelligent work platform.