What Is a Voice Assistant?

It is almost impossible to give a single name to every technology that makes our lives easier. A variety of terms refer to agents that can perform tasks or services for an individual, and they are almost interchangeable, but not quite. They differ mainly in how we interact with the technology, the app, or a combination of both.

Here are some basic definitions, similarities, and differences:

- Intelligent Personal Assistant: This is software that can assist people with basic tasks, usually using natural language. Intelligent personal assistants can go online and search for an answer to a user’s question. Either text or voice can trigger an action.
- Automated Personal Assistant: This term is synonymous with intelligent personal assistant.
- Smart Assistant: This term usually refers to the types of physical items that can provide various services by using smart speakers that listen for a wake word to become active and perform certain tasks. Amazon’s Echo, Google’s Home, and Apple’s HomePod are types of smart assistants.
- Virtual Digital Assistants: These are automated software applications or platforms that assist the user by understanding natural language in either written or spoken form.
- Chatbot: Text is the main way to get assistance from a chatbot. Chatbots can simulate a conversation with a human user. Many companies use them in the customer service sector to answer basic questions and connect with a live person if necessary.
- Voice Assistant: The key here is voice. A voice assistant is a digital assistant that uses voice recognition, speech synthesis, and natural language processing (NLP) to provide a service through a particular application.

For the purpose of this discussion, the term voice assistant will be used interchangeably with the following related terms: intelligent personal assistant, automated personal assistant, smart assistant, and virtual digital assistant.

The Uses of Voice Assistants

Many devices we use every day include voice assistants. They’re on our smartphones and inside smart speakers in our homes. Many mobile apps and operating systems use them. Additionally, certain technology in cars, as well as in retail, education, healthcare, and telecommunications environments, can be operated by voice.

The Growth of Voice Assistants

Technology is constantly advancing and changing, and the voice assistant market will progress along with it. In April 2015, the research firm Gartner predicted that by the end of 2018, 30 percent of interactions with technology would be through “conversations” with smart machines, many of them by voice.

Tractica is a market intelligence firm that focuses on human interaction with technology. Its reports say the number of unique consumer users of virtual digital assistants (which the firm defines as automated software applications or platforms that assist the human user through understanding natural language in written or spoken form) will grow from more than 390 million worldwide in 2015 to 1.8 billion by the end of 2021. The number of business users is expected to grow from 155 million in 2015 to 843 million by 2021. With that kind of projected growth, revenue is forecast to grow from $1.6 billion in 2015 to $15.8 billion in 2021.
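To put the Tractica figures on a common footing, the short Python sketch below converts each 2015-to-2021 forecast into an implied compound annual growth rate. It is only a back-of-the-envelope illustration: the `cagr` helper and the `forecasts` table are names made up for this example, the six-year span is an assumption (2015 through 2021), and the starting user count is a floor because the report says "more than 390 million."

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by growing from start to end over the given years."""
    return (end / start) ** (1 / years) - 1


YEARS = 2021 - 2015  # assumed six-year span between the two forecast points

# Start and end values copied from the forecasts quoted above.
forecasts = {
    "consumer users (millions)": (390, 1800),
    "business users (millions)": (155, 843),
    "revenue (billions USD)": (1.6, 15.8),
}

for label, (start, end) in forecasts.items():
    print(f"{label}: about {cagr(start, end, YEARS):.0%} per year")
```

Under these assumptions, revenue is projected to grow noticeably faster each year than the number of users.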

According to Global Market Insights, Inc., between 2016 and 2024, the market share for the technology will grow at an annual rate of almost 35 percent. More and more sectors of the economy, like healthcare and the automotive industry, are finding uses for the speech recognition technology in addition to those found in devices like smart speakers and phones.

Popular Voice Assistants

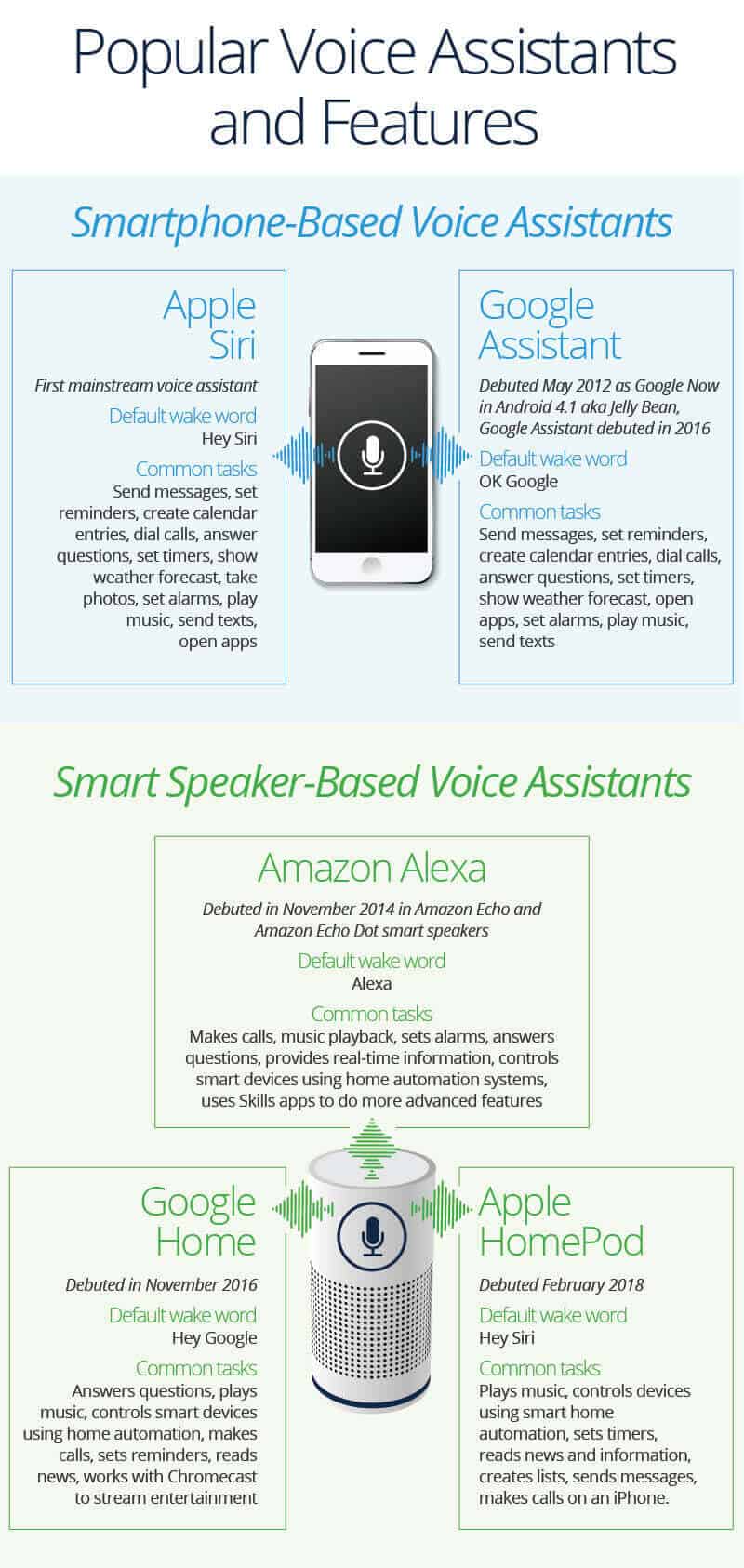

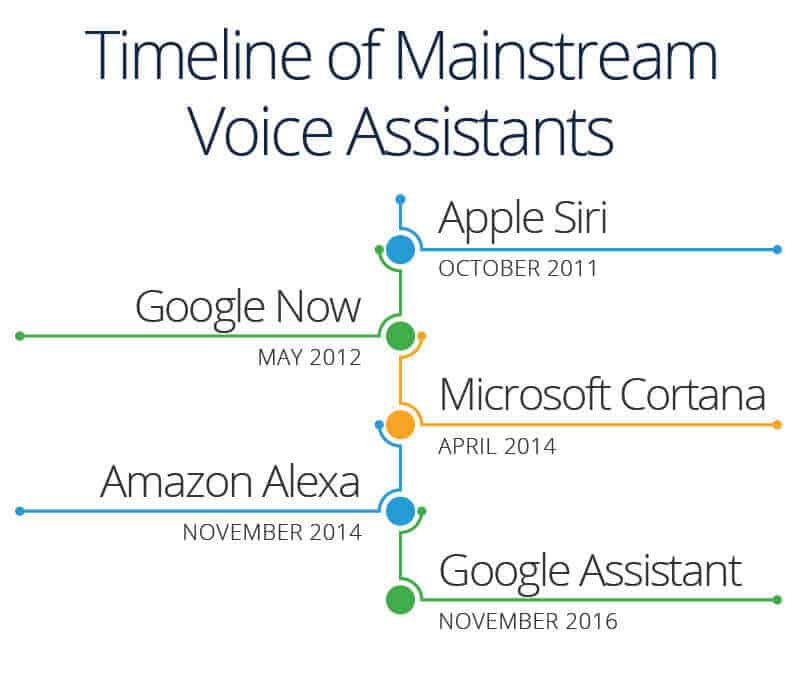

Siri by Apple became the first digital virtual assistant to be standard on a smartphone with the iPhone 4s, which Apple announced on October 4, 2011. Siri moved into the smart speaker world when the HomePod debuted in February 2018.

Google Now (which became Google Assistant) on the Android platform followed. It also works on Apple’s iOS, but has limited functionality.

Then the smart speakers came along, and “Alexa” and “Hey Google” became a part of many household conversations. Alexa by Amazon is part of the Echo and the Dot. Google Assistant is part of the Google Home.

Samsung has Bixby, IBM has Watson, and Nuance has Nina. Microsoft has Cortana on Windows 10, Xbox One machines, and Windows phones. Facebook used to have M, but its usage in the Facebook Messenger app ended in January 2018.

By default, most of the voice assistants have somewhat female-sounding voices, although the user can change them to other voices. Many people refer to Siri, Alexa, and Cortana as “she” and not “it.”

Developers are constantly creating new features for voice assistants, which further integrates them into our lives. In the 2013 movie Her, a man developed such an intense relationship with his female voice assistant that he fell in love with her. Critics loved the film, and it received numerous award nominations, including Academy Award and Golden Globe nominations.

What Is an Intelligent Personal Assistant?

An intelligent personal assistant can help someone with basic tasks. These assistants often understand natural language and can help with things like creating meeting requests, reporting a sports score, and sharing the weather forecast. They have access to a large amount of information on a device or online, which enables them to perform simple tasks.

Other terms for an intelligent personal assistant include chatbot, automated personal assistant, or automated virtual personal assistant.

Siri, Google Assistant, Cortana, Amazon Alexa, and others are examples of intelligent personal assistants.

The History of Voice Assistants

Voice recognition technology was around long before Apple’s Siri debuted in 2011. At the Seattle World’s Fair in 1962, IBM presented a tool called Shoebox. It was the size of a shoebox and could perform mathematical functions and recognize 16 spoken words as well as digits 0-9.

In the 1970s, scientists at Carnegie Mellon University in Pittsburgh, Pennsylvania — with the substantial support of the United States Department of Defense and its Defense Advanced Research Projects Agency (DARPA) — created Harpy. It could recognize 1,011 words, which is about the vocabulary of a three-year-old.

Once organizations came up with inventions that could recognize word sequences, companies began to build applications for the technology. The Julie doll from the Worlds of Wonder toy company came out in 1987 and could recognize a child’s voice and respond to it.

Throughout the 1990s, companies like IBM, Apple, and others created products that used voice recognition. Apple began building speech recognition features into its Macintosh computers with PlainTalk in 1993. In April 1997, Dragon came out with Dragon NaturallySpeaking, which was the first continuous dictation product. It could understand about 100 words per minute and turn them into text. Medical dictation was one of the earliest uses of voice recognition technology.

Technology companies are working to create increasingly sophisticated technology that will automate more processes and tasks we do throughout the day. Even Siri, Google Assistant, and Alexa can “learn” new words and tasks.

How Do Artificial Intelligence Assistants Interact with People?

As technology evolves, the ways people interact with it also change. Think about how internet searches have become easier. It wasn’t long ago that an internet search had to be very specific and would often yield strange and unrelated results. Now, it seems like search engines, such as Google, can almost read your mind and know exactly what you are looking for. Search engines understand the context and intent of your search.

Artificial intelligence assistants have also evolved. Early on, text was the only way to interact with an assistant app (typing in a phrase triggered a response). Now, voice has taken over.

Assistant apps or smart speakers are always listening for their wake words. By default, the words “Hey Siri,” “OK Google,” “Hey Google,” and “Alexa” are the standards on their respective devices, but users can personalize their wake words to some degree. “Alexa” can become “Echo,” “Amazon,” or simply “computer.” The ability to make these adjustments can be especially helpful if someone named Alex or Alexis lives in the home.

Wake words rely on a special algorithm that is always listening for a particular word or phrase so that a phone, smart speaker, or something else can begin communicating with a server to do its job. Wake words need to be long enough to be distinct, easy for a human to speak, and simple for a machine to recognize. This is why you cannot change your wake word to anything you want it to be.

Voice assistants don’t really “understand” what you’re saying. The device just listens for its wake word and then begins communicating with a server to complete a task. On the server, NLP, a form of artificial intelligence that helps technology interpret human language, is used to work out what was asked.
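The wake-word behavior described above can be sketched in a few lines of Python. This is a toy illustration that works on text rather than audio, not how any real assistant is implemented: `WAKE_WORDS`, `fake_server`, and `run_device` are hypothetical names invented for the example, and the wake words are simply the ones mentioned in the text.

```python
from typing import Callable, Iterable, List

# Illustrative wake words taken from the examples in the text.
WAKE_WORDS = {"alexa", "echo", "computer"}


def fake_server(command: str) -> str:
    """Hypothetical stand-in for the remote service that interprets a command."""
    return f"server received: {command!r}"


def run_device(chunks: Iterable[str], send: Callable[[str], str] = fake_server) -> List[str]:
    """Drop every chunk that does not start with a wake word; forward the rest of those that do."""
    responses = []
    for chunk in chunks:
        words = chunk.lower().replace(",", "").split()
        if words and words[0] in WAKE_WORDS:
            command = " ".join(words[1:])
            if command:
                responses.append(send(command))
        # Chunks without a wake word are ignored: nothing is stored or sent.
    return responses


heard = [
    "what should we have for dinner",
    "Alexa, what is the weather today",
    "I think it might rain",
]
print(run_device(heard))
```

Only the second phrase reaches the stand-in server, because it is the only one that begins with a wake word. The local check is deliberately simple, and all interpretation of the command is left to the server side.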

Voice Assistants on Our Phones

Voice assistants allow us to do a variety of tasks hands-free, which is a major reason many people like using them, especially on their phones. Apple has Siri. Google phones and most Android phones have Google Assistant. Samsung has Bixby. Windows phones have Cortana.

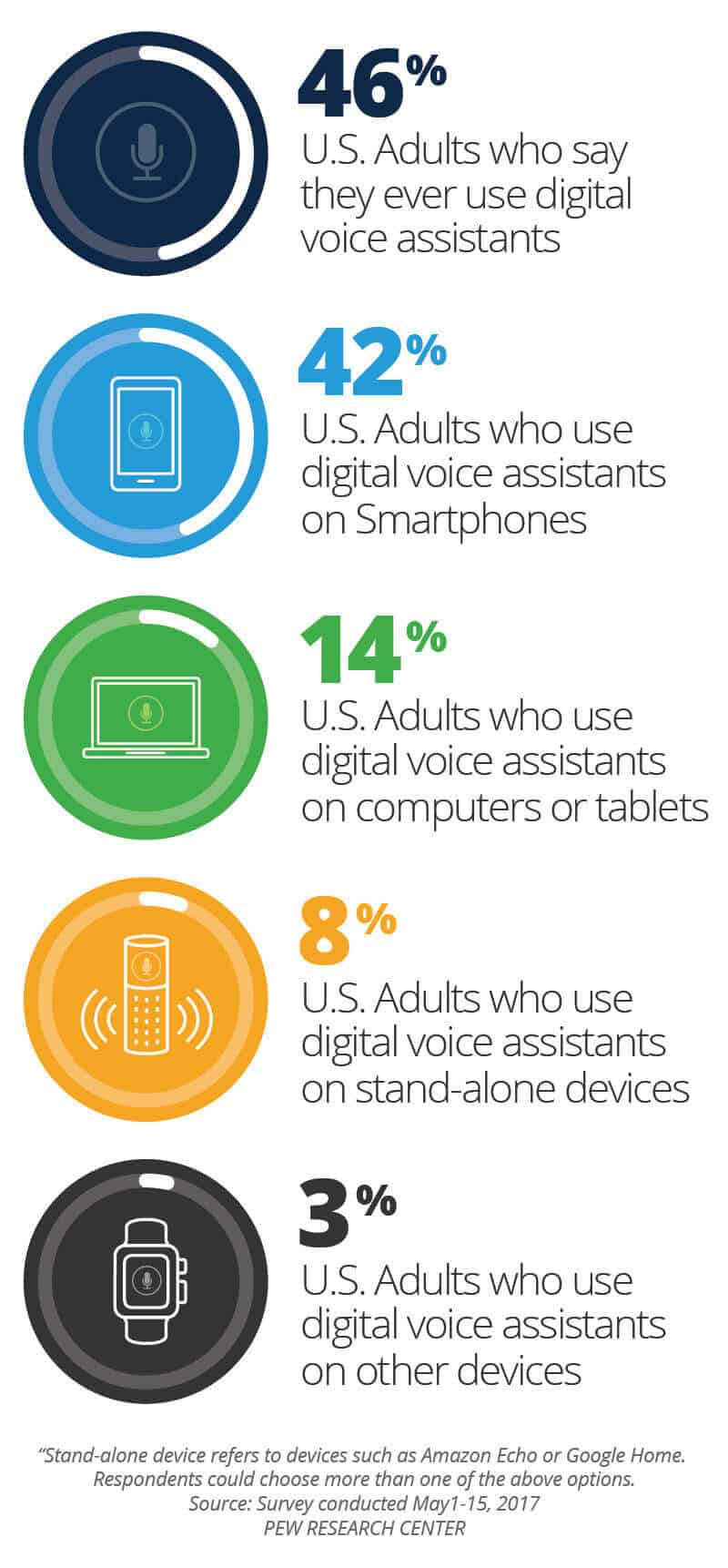

A Pew Research Center survey in May 2017 showed that nearly half of all adults in the United States use voice-controlled digital assistants on their smartphones and other devices.

Voice assistants can make calls, send text messages, look things up online, provide directions, open apps, set appointments on our calendars, and initiate or complete many other tasks.

With the addition of separate apps on the phone, our voice can be a type of remote control for our lives. We can unlock cars and homes, turn on lights, adjust the thermostat, change the television channel, and much more.

Voice Assistants in the Home: The Rise of the Smart Speaker

When Siri debuted on iPhones in 2011, she changed the world, transforming the way we used our phones and other technology. What Siri did for phones, Alexa did for homes, initiating the rise of the smart speaker.

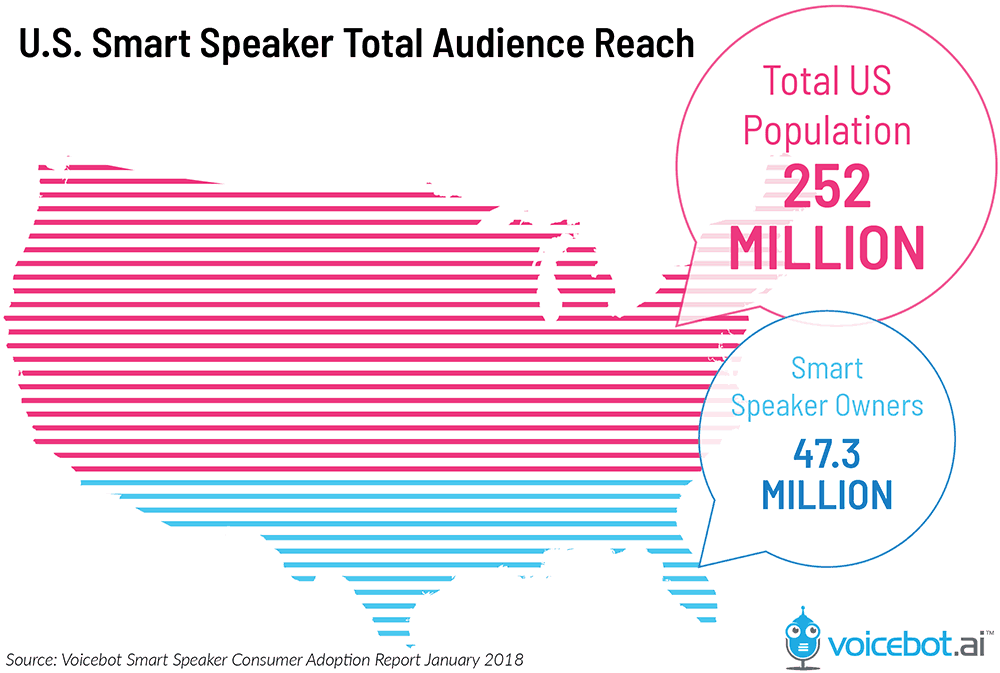

The Voicebot Smart Speaker Consumer Adoption Report 2018 revealed that almost 20 percent of U.S. adults (about 47.3 million) had a smart speaker in their home. Based on responses in the survey, that number is expected to rise rapidly.

“It’s growing faster than even the web and mobile, and the adoption is faster. I think people are more open to new technology than they were before. It doesn’t seem foreign to us to talk to something. Voice is a very convenient way to communicate, especially with technology. The adoption is pretty remarkable across all age groups,” Mutchler says. Also, smart speakers are cheaper than smartphones, so it’s less of an investment to try one.

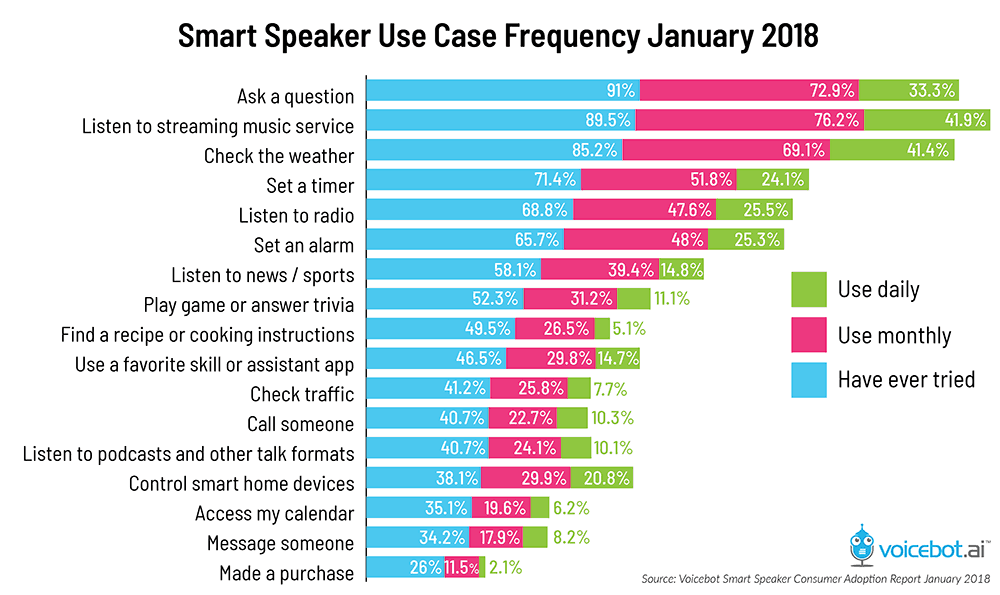

People use smart speakers for a variety of functions. At the bottom of the utilization chart below, you’ll notice that only 26 percent of smart speaker owners make a purchase using the smart speaker. However, almost 12 percent of those users purchase something monthly, so there is plenty of room for growth.

“People need to get comfortable with using voice assistants to order stuff,” Mutchler notes, adding that it’s easier to order things like toilet paper than it is to order something like a ski boot when using a voice assistant. With a voice assistant, you can’t see a boot or read a description about it. You have to blindly trust the recommendation, which is difficult for many people to do.

Voice Assistant Usage for Businesses

Even as the home market for smart speakers is booming, the business world has been slow to adopt the technology. But it’s coming.

Voice assistants could change the way many places do business. Lucas uses the example of a laboratory where people have to wear thick gloves and bodysuits for safety and contamination reasons. It isn’t practical for them to type anything or push buttons to make something happen, but voice technology could change everything by automating tasks.

Also, many of the skills that businesses need in order to automate tasks are not readily available. “Companies need to build a specific skill that a business wants. They do not exist off the shelf,” Lucas emphasizes. In order to keep development costs down, the applications should be generic enough to perform a wide variety of business tasks.

Some business systems do not allow the technology. For example, some purchasing departments require payment with invoices and purchase orders, so those companies cannot use the direct voice ordering that is popular with smart speakers.

There are also hurdles because voice technology is far ahead of regulations and requirements. Unlike general consumers, businesses need to consider things like access authorization, archiving, and records management.

For the companies that do embrace the technology, many employees use smart speakers for various tasks during meetings. If someone needs to know a fact or an address, the voice assistant can search for that data and give a response. It can also take notes, record action items, set meetings on calendars, and create to-do and follow-up lists, all of which save time and keep people focused on the meeting.

“A lot of what this comes down to is context switching. If you have one conversation, go to do something else, and then try to come back, you lose something,” Lucas says. Being able to ask a voice assistant for a sales figure or a report eliminates the need for a person who is in the meeting to take their attention away from the meeting to find a report.

In addition, if AI assistants have access to company databases, they can compile statistics, automate tasks, and turn dictations into text reports.

“I think the voice is going to be the single biggest game changer since the web in terms of how consumers interact with services. Voice assistants are going to take people away from screens,” Lucas predicts. “This is going to take years. We’re still in day one with this stuff, which is exciting. So many things have been consumer-driven first and then moved into the business world,” he adds.

The Drawbacks of Voice Assistants

As the acceptance and usage of voice assistants continues to grow, it is only natural for some people to have reservations about using them. Below, we discuss some of the major issues regarding voice assistants.

- Privacy: Privacy is a concern, especially involving smart speakers. While waiting for a wake word, smart speakers are always listening. On a smartphone, pressing a button or opening an app activates the assistant. Once you wake it up, it begins recording audio clips of what you say. These clips are the files that go to a server, which processes the audio and formulates a response. The real brains are not in the little speakers in our homes: They’re on a massive server somewhere else. The speaker sends the clips over an encrypted connection. Speakers do not record anything prior to the wake words.

“People confuse ‘always listening’ with ‘always recording,’” says Mutchler of Voicebot.ai. “The genius of [smart speakers] is they can remove background noise and single out the wake word,” she continues. Only then do they begin recording.

Smart speakers and other AI assistants, like those on a smartphone, save these recordings and allow the user to go into their account and delete them.

There are also questions about what can happen with those recordings. One situation that raises these privacy concerns is using the recordings as possible evidence in a criminal investigation. Back in 2016, detectives in an Arkansas murder case found an Amazon Echo linked to many smart home devices at a murder scene. Police seized the Echo and tried to get information from it by serving a warrant to Amazon for records of any recordings on the device. Amazon did not release the information, and it is unclear what law enforcement expected to get from the smart speaker and its files.

Laws surrounding information on our phones and devices are struggling to keep up with the ever-changing technology and how we use it. There are even questions about whether smart speakers and other devices should have a mechanism to report dangerous words, search patterns, or activity to authorities. What happens if someone asks a voice assistant to do something illegal? Should it be able to override our commands? These issues and others are sure to be the subjects of new laws as the technology continues to change.

Even though smart speaker usage is growing amongst all age groups, younger people don’t seem to have as much of a problem with privacy as older ones. “I think we’re so used to having our privacy invaded for convenience,” Mutchler says.

Lucas points out that twenty years ago, the thought of having an ever-listening speaker in our homes wouldn’t have sat well with consumers. But our lines of concern are different than they once were. “You’ll accept all of these things because they are helpful,” he says.

- Accuracy: Voice assistants don’t always understand what we are asking. Sometimes, it’s how we speak. Other times, it is simply because the artificial intelligence hasn’t yet learned how to do something.

A 2017 report from the consulting company Stone Temple used 5,000 questions to test the accuracy of Google Assistant, Cortana, Siri, and Alexa. Google got the most correct responses.

There is also a question concerning answer sources. During an online search, a user can select results, note the source, and click for more information. When asking a voice assistant a question, the answer usually comes back as fact, often without stating the source.

The “conversations” people have with their voice assistants are not really two way at all. To ask a follow-up question, you need to wake the assistant up again. Also, real people need to monitor the artificial intelligence in order for it to “learn” new things.

- Hackability and Security: Even though voice assistants communicate with their servers using encrypted connections, there is still a concern about hackability and security.

In early 2018, some users of Amazon’s Echo reported it would suddenly emit an evil laugh for no reason. In the beginning, people thought someone had hacked into their smart speakers. Amazon investigated the problem and later announced that the Echo had been hearing words similar to “Alexa laugh,” so it began laughing. As a response, Amazon disabled the reaction and changed Alexa’s response to a user’s request that it laugh to “Sure, I can laugh,” followed by laughter.

Since some smart speakers can recognize and respond to any nearby voice, a guest can check or alter your calendar or your contacts. Also, an annoyed neighbor can set an alarm for an early morning wake-up call by yelling through your door.

With regard to this capability, be careful not to link door locks and security systems to voice assistants. If you do, a burglar could just as easily say “unlock the front door” or “disable security cameras” as you could.

Someone could also use your device to make purchases without you knowing about it. In order to avoid this possibility, Alexa allows you to set a PIN confirmation option for voice purchases.

For business, the security concerns are a bit different. Lucas uses the following example: In the past, burglars would break into the CEO’s office and steal documents, like sales data and earnings reports. Businesses adapted security procedures. Then, companies began storing information on computers, so hacking into files became the crime. Businesses adapted security procedures. Now, a thief can simply ask a voice assistant for critical data. Security procedures need to adapt. “I think it’s quite likely you will see enterprise solutions coming out. They might be based on consumer technology but with add-ons,” Lucas says.

The Battle of the Bots

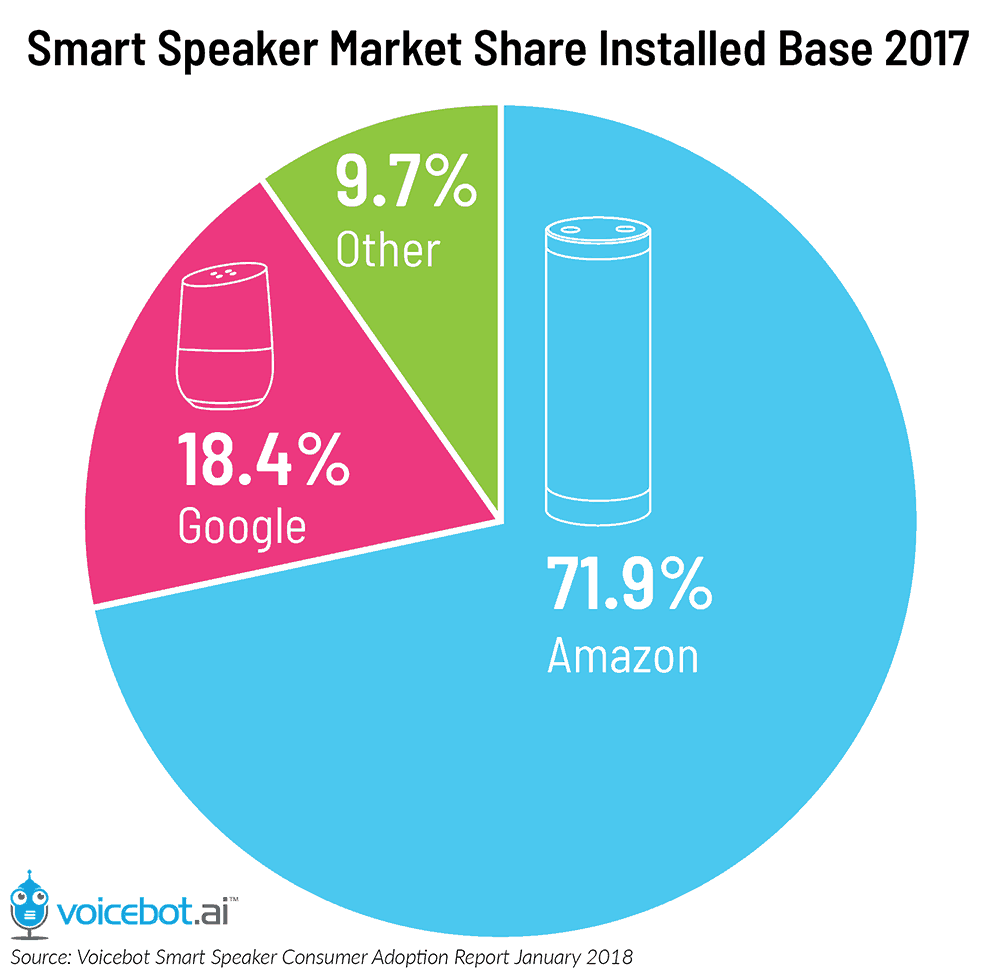

The battle for voice assistant market share is fierce. Amazon was first with the Echo, but Google burst onto the scene and was able to gain market share quickly in the United States.

Google had experience with searches, languages, and data, so it was able to jump into the smart speaker market quickly, Mutchler explains. Apple’s HomePod is lagging behind, mostly because of its limited features, the same factor that hinders Siri on iPhones. “She’s not open to developers. She’s very restrained,” Mutchler says.

Google Assistant spoke many languages from the very beginning, something Alexa had to learn how to do. Google Home was the first to go on sale outside the U.S., and Amazon Echo was the first to offer commerce. Both companies’ assistants have always been able to understand multiple voices and differentiate between them. Siri has not.

When one company unveils a new feature, the others are usually not too far behind. In the beginning, making calls to a phone was not something smart speakers could do. Now, they can. Today, all options can play music, add items to calendars, perform searches, send messages, answer questions, control some smart devices, and much more. “It’s a constant feature game. Creating a voice assistant is more difficult than people think. They [competitors] make each other better,” Mutchler emphasizes.

Smart speaker companies do not make money on the sales of the hardware; the money is in how we will use smart speakers in the future. The key is getting people on board with a brand early, much like with smartphones. “Once you start with one assistant, you might not go back and try another one. It’s difficult to get people to switch,” Mutchler suggests.

Building a Bot

If the available bots don’t perform all the tasks you want them to, it is possible to build your own. For a text-based bot, you don’t even need to know how to code. There are apps available to help people create assistants that can automate tasks or events.

Creating a voice-activated bot is much more difficult. That’s where companies like Converse.AI come in. “We make it easier for non-developers to build and automate the services that they need. No coding experience is required,” Lucas says.

A text-based bot automates tasks and interacts with customers. It can also help answer questions for clients, access databases, and help customers help themselves. For more information about customer self-service portals, many of which use bots, read "Customer Service Portals: Help Your Users Help Themselves."

If you choose to create a bot, make sure it’s representative of your brand. Also, make sure it works, since technology will not do your business any good if it doesn’t help customers. “The danger is that people will try it, it won’t work, and they won’t go back,” Mutchler warns. She mentions Samsung’s Bixby, which debuted on the Galaxy S8 phone but was not fully functional when it came out. Many customers tried it a few times, then asked Samsung to develop a way to disable it, which they did in a software update.

Here are some other elements to consider when building a bot:

- Remember the end user.
- Choose useful features.
- Give it personality.
- Integrate it with various platforms.

Building a bot takes time, so it’s better not to rush it. Focus on doing a few things extraordinarily well instead of trying to do many things (and, therefore, doing them unsuccessfully). Also, remember to update the bot as necessary. It’s not a “build it and leave it” venture.

The Future of Voice Assistants

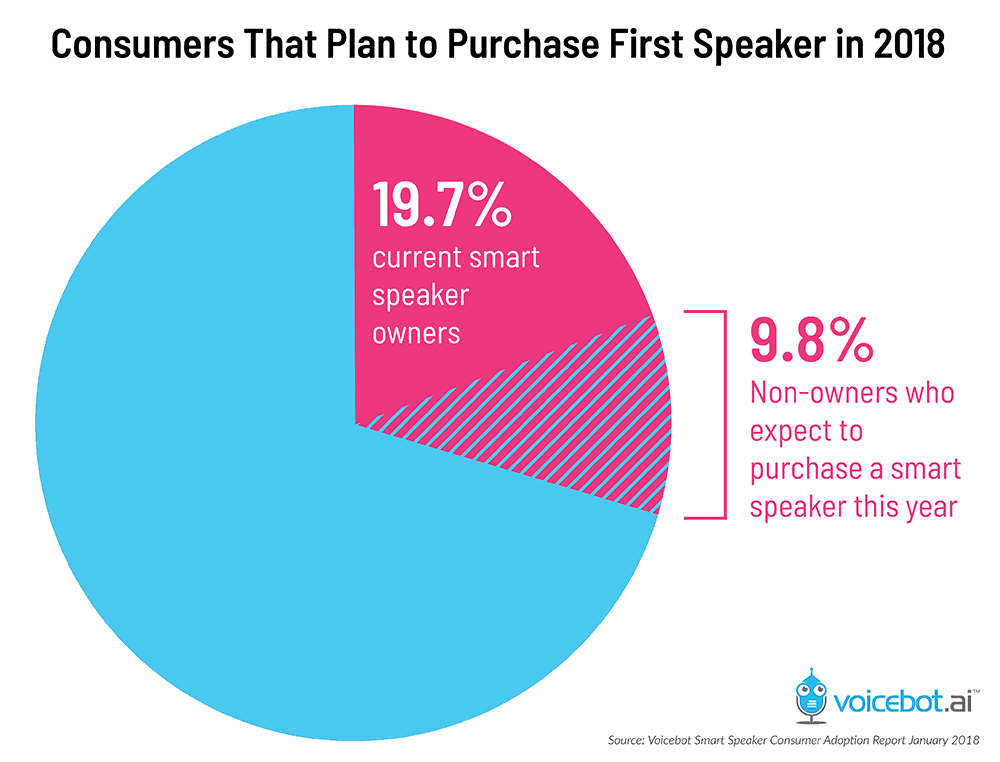

The number of people using voice assistants is expected to grow. According to the Voicebot Smart Speaker Consumer Adoption Report 2018, almost ten percent of people who do not own a smart speaker plan to purchase one. If this holds true, the smart speaker user base will grow 50 percent, meaning a quarter of adults in the United States will own a smart speaker.

Smart speaker sales are expanding in other parts of the world, meaning they need to “learn” how to “understand” languages, accents, dialects, slang, and nuances in each country in which they are sold. Chinese companies are developing their own smart speakers. “The rest of the world is behind the U.S. and will catch up pretty quickly,” Mutchler says.

Voice assistants are always improving and “learning.” AI companies use data from existing systems to improve what assistants can do. Lucas believes that ultimately, the voice assistant might get so smart that it will automatically order a pizza if you say you’re hungry. It will use existing data from your previous purchases to come to the conclusion that saying you’re hungry equals ordering a pizza.

The experts predict that voice assistants will improve in many other ways. As described in a 2017 article for The Atlantic, “A subfield of AI called computational creativity forges algorithms that can write music, paint portraits, and tell jokes.” These capabilities will help smart speakers “show emotion” and “think” for themselves without being scripted. Systems that explain why they did what they did and what they’re going to do next are also on the horizon.

Voice assistants are not going anywhere. “I think people thought of it as a fad, but it’s not. It’s changing what people do in their homes. Voice assistants will grow and are here to stay,” Mutchler says. “I think they [voice assistants] will be in everything, and the smart speaker might fade away in a few years because many technologies, like televisions and refrigerators, will have their own voice assistants. The kids today won’t understand that there was a world where you couldn’t talk to things,” she concludes.
